The crucial role of quality engineering in software development

Quality Engineering (QE) is a vital component of successful software development and testing. It not only affects the technical aspects of IT delivery, but also plays a crucial role in delivering value to customers and meeting business objectives. Through quality engineering, the IT team and its stakeholders take shared responsibility for consistently delivering IT systems of the right quality at the right moment.

In this blog, I will share a six-phase implementation of QE that has proven successful in achieving seamless IT delivery. It is based on my experience as a test lead and quality engineer in one of the teams I have worked with.

A value-driven journey from concept to production

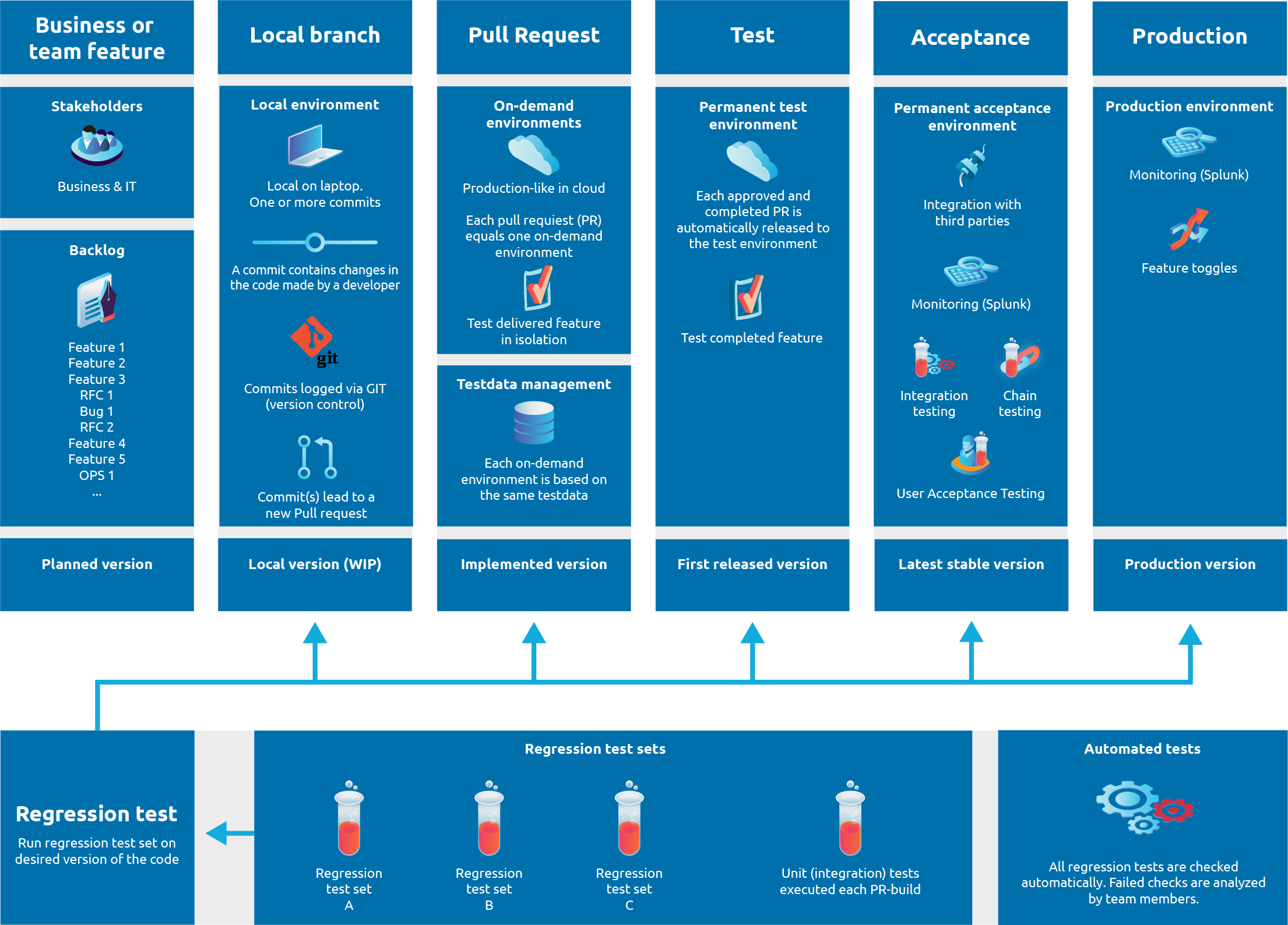

My team's implementation of Quality Engineering (QE) prioritized the seamless flow of value from the concept phase to production. We recognized that each phase in this flow required specific quality measures tailored to our needs (refer to the QE strategy template for more quality measures). Before I delve further into this blog post, I would like to share my personal definition of a quality engineering value flow.

To me, the quality engineering value flow is a connected series of phases through which value flows from concept to production. Throughout this flow, you employ both automated and manual quality measures to deliver the right value with speed and confidence. The key to success lies in crafting these measures to fit your unique context: just like test automation, there is no one-size-fits-all solution. In this blog, I will provide examples of such automated and manual measures. At the core of our strategy are on-demand environments, which accelerated our software delivery.

As the term quality engineering implies, team members and stakeholders share responsibility for success within the value flow. This requires a balance. An excessive focus on delivering business value can hinder the development team's long-term efficiency. Conversely, prioritizing the development team's own work might leave stakeholders waiting for essential value. A successful QE value flow enables a sustainable equilibrium, in which both parties remain content and confident in delivering value to their stakeholders.

The power of cloud native architecture for agile development and testing

Modern cloud native architecture offers exciting possibilities for development and testing. Within our team, we explored these possibilities and built a proof of concept that fundamentally changed our approach. One aspect that particularly stood out for us was the concept of on-demand environments.

Simply put, on-demand environments allow us to swiftly spin up production-like environments, complete with all the necessary cloud services, including a database and frontend. I will delve deeper into this topic later in the blog.

Six value phases

In our specific context, I identify six distinct phases in which value is added. Typically, this value takes the form of code that moves from a planned phase to production. In our case, nearly every backlog item is delivered as code: system features, infrastructure enhancements, and even test data. Consequently, we define value as code that matters to the customer, the development team, or both. Not all code reaches production, because some code delivers its value in an earlier phase; examples are the code for automated checks in an automated regression set, and test data. The six distinct value phases we identify are as follows:

- Planned version of the code

- Local version of the code

- Implemented version of the code

- First released version of the code

- Latest stable version of the code

- Production version of the code

In the subsequent sections, I will explore each phase in detail and explain their significance within our value delivery process.

Planned version

The initial phase is the planning stage, in which the development team, alongside the business, acts as a stakeholder of the system under development. While new features, changes, and bug fixes directly add value for the business and its stakeholders, the development team brings an additional dimension of value. By focusing on aspects such as robustness, maintainability, and scalability, the team lays the groundwork for a sustainable and efficient system that can absorb future changes and respond quickly to evolving business needs. Another example is testability, which improves the ability to determine whether the product is fit for purpose.

Backlog items flow into the team from two sources: internal and external. Internal items include automation work, such as automated test data seeding or automated checks, refactoring, and improvements to the CI/CD implementation. External backlog items are primarily business-driven features.

Together, these planned items are maintained on the backlog, and the product owner is responsible for prioritizing them. As a development team, it is crucial to stress to the product owner the importance of prioritizing ‘internal’ items, because these tasks are essential for building and maintaining a healthy system of the right quality, in line with the standards your team upholds. Neglecting them may jeopardize the team's ability to deliver quality at speed, ultimately harming the value for everyone involved.

Local version

Once planned items are picked up, our team starts coding, typically on a local machine or laptop. To track code modifications, we rely on Git, a distributed version control system, and follow a trunk-based development approach. This allows us to track changes within the codebase and merge them smoothly with the rest of the code. As team members add, modify, or delete code, they push individual commits or groups of commits to a feature branch.

Upon completion, the developer opens a pull request for the feature branch, which is the starting point for the next phase in our QE value flow. This is where the potential of on-demand environments truly shines.

Implemented version

For us, a pull request is an implemented version of the code, ready for building and testing. It is also the starting point for our on-demand environments. Creating the pull request triggers an automated pipeline, which deploys the software to a production-like environment hosted on our cloud provider (AWS). Running unit tests before the on-demand environment is created is good practice: it ensures that potential issues are identified and resolved before deployment.
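The ordering described here (unit tests first, then the on-demand environment) can be sketched as follows. This is a minimal, self-contained simulation, not our actual AWS pipeline: the function `run_pr_pipeline`, the `pr-<number>` naming convention, and the `deploy` callback are hypothetical stand-ins for whatever your CI/CD tooling provides.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class PipelineResult:
    pr_number: int
    unit_tests_passed: bool
    environment: Optional[str]  # None when deployment was skipped
    log: List[str] = field(default_factory=list)


def run_pr_pipeline(
    pr_number: int,
    unit_tests: List[Callable[[], None]],
    deploy: Callable[[str], None],
) -> PipelineResult:
    """Hypothetical PR pipeline: unit tests gate the on-demand deployment."""
    log = [f"PR #{pr_number} created: pipeline triggered"]
    failed = []
    for test in unit_tests:
        try:
            test()
        except AssertionError:
            failed.append(test.__name__)
    if failed:
        # Fail fast: do not spend cloud resources on code that is already broken.
        log.append(f"unit tests failed ({', '.join(failed)}): deployment skipped")
        return PipelineResult(pr_number, False, None, log)
    env_name = f"pr-{pr_number}"  # hypothetical naming: one environment per PR
    deploy(env_name)              # stand-in for provisioning the production-like stack
    log.append(f"on-demand environment {env_name} deployed")
    return PipelineResult(pr_number, True, env_name, log)


# Usage: a passing PR gets an environment, a failing one does not.
deployed: List[str] = []


def check_addition() -> None:
    assert 1 + 1 == 2


def check_uppercase() -> None:
    assert "qe".upper() == "qe"  # deliberately wrong expectation


ok = run_pr_pipeline(42, [check_addition], deployed.append)
bad = run_pr_pipeline(43, [check_addition, check_uppercase], deployed.append)
```

Failing fast like this keeps broken code from consuming cloud resources and reserves on-demand environments for changes that are worth exploring.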

Once a fresh on-demand environment has been created, you can do several things with it: seed it with test data, run automated regression checks, use it as a test or demonstration environment, or even offer it as a playground for your end users.
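To make the idea of seeding concrete, here is a minimal sketch, assuming a relational database and using Python's built-in `sqlite3` in-memory database as a stand-in for the environment's real one. The table, column names, and sample values are hypothetical. The key point is the fixed seed: every environment seeded this way starts with exactly the same data, which is what makes test results predictable.

```python
import random
import sqlite3
from typing import List, Tuple

FIRST_NAMES = ["Alice", "Bob", "Carol", "Dave", "Erin"]  # hypothetical sample values


def create_seeded_environment_db(seed: int = 2023, rows: int = 5) -> sqlite3.Connection:
    """Create a fresh in-memory database and fill it with deterministic test data."""
    conn = sqlite3.connect(":memory:")  # stands in for the environment's real database
    conn.execute(
        "CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT, credit_limit INTEGER)"
    )
    # A dedicated generator with a fixed seed yields the same values on every run
    # (on the same Python version), independent of any other use of `random`.
    rng = random.Random(seed)
    records = [
        (i, rng.choice(FIRST_NAMES), rng.randrange(1_000, 10_001, 500))
        for i in range(1, rows + 1)
    ]
    conn.executemany("INSERT INTO customers VALUES (?, ?, ?)", records)
    conn.commit()
    return conn


def snapshot(conn: sqlite3.Connection) -> List[Tuple[int, str, int]]:
    """Read back all customer rows in a stable order."""
    return conn.execute(
        "SELECT id, name, credit_limit FROM customers ORDER BY id"
    ).fetchall()


# Two independent environments, seeded with the same seed, hold identical data.
env_a = create_seeded_environment_db()
env_b = create_seeded_environment_db()
assert snapshot(env_a) == snapshot(env_b)
```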

On-demand environments bring a range of benefits. Firstly, they are production-like because they use similar cloud components. Secondly, they offer controlled and predictable test data, enabling reliable tests. Thirdly, they operate independently of other on-demand environments, avoiding interference. Moreover, any stakeholder you choose can access them. Lastly, they allow us to test new features in isolation, ensuring optimal quality before integration into the next phase. This last benefit is one of my personal favorites: we can thoroughly test and learn from code changes, establishing confidence before releasing them to the next phase.

Once an on-demand environment has served its goal (typically a tested feature or bug fix), we complete the pull request. This automatically removes the corresponding on-demand environment, including the database and all other cloud components that were created. All our on-demand environments are therefore temporary: they exist only as long as they serve their purpose. Completing the pull request also merges the feature branch into the main branch, which brings us to the next phase in our QE value flow.
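The lifecycle described above (one environment per pull request, removed when the pull request is completed) can be modeled as simple bookkeeping. The class `OnDemandEnvironments` and its methods are hypothetical; in practice, the lines marked as stand-ins would be calls to your pipeline and cloud provider.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class OnDemandEnvironments:
    """Hypothetical bookkeeping: exactly one temporary environment per open PR."""
    active: Dict[int, str] = field(default_factory=dict)    # PR number -> environment
    branches: Dict[int, str] = field(default_factory=dict)  # PR number -> feature branch
    destroyed: List[str] = field(default_factory=list)      # torn-down environments
    merged_into_main: List[str] = field(default_factory=list)

    def pull_request_created(self, pr_number: int, branch: str) -> str:
        env = f"pr-{pr_number}"  # hypothetical naming convention
        # Stand-in: a real pipeline would provision database, frontend, etc. here.
        self.active[pr_number] = env
        self.branches[pr_number] = branch
        return env

    def pull_request_completed(self, pr_number: int) -> None:
        # Completing the PR does two things: remove the environment and merge the branch.
        env = self.active.pop(pr_number)  # KeyError if the PR has no environment
        self.destroyed.append(env)        # stand-in for deleting every cloud component
        self.merged_into_main.append(self.branches.pop(pr_number))


envs = OnDemandEnvironments()
envs.pull_request_created(42, "feature/invoice-export")
envs.pull_request_created(43, "bugfix/rounding-error")
envs.pull_request_completed(42)
# envs.active == {43: "pr-43"}; envs.destroyed == ["pr-42"]
```

Tying teardown to the same event as the merge is what keeps environments from lingering: nobody has to remember to clean up.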

First released version

This phase is all about releasing the implemented code to a permanent test environment. It is a vital checkpoint to verify whether the release works as intended on an existing environment. This differs from on-demand environments, which are freshly generated for each pull request. At this stage, aspects that could not be tested in an on-demand environment come into focus. For instance, adding new tables or columns to a database that already contains data may lead to unforeseen issues. There are other reasons to keep a permanent test environment too, such as testing third-party integrations.
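A hypothetical example shows why a freshly generated environment can miss problems that a long-lived one exposes. Below, a migration adds a unique index on an `email` column (table and index names are illustrative). It succeeds on a fresh database seeded with clean data, but fails on a database that has accumulated duplicate values over time. `sqlite3` is used only because it ships with Python; your production database will report the error differently.

```python
import sqlite3

SCHEMA = "CREATE TABLE customers (id INTEGER PRIMARY KEY, email TEXT)"
# Hypothetical migration shipped with a new release.
MIGRATION = "CREATE UNIQUE INDEX idx_customers_email ON customers(email)"


def apply_migration(conn: sqlite3.Connection) -> str:
    """Run the migration and report the outcome instead of raising."""
    try:
        conn.execute(MIGRATION)
        return "ok"
    except sqlite3.IntegrityError as exc:
        return f"failed: {exc}"


# Fresh on-demand environment: schema plus clean seed data.
fresh = sqlite3.connect(":memory:")
fresh.execute(SCHEMA)
fresh.executemany("INSERT INTO customers (email) VALUES (?)",
                  [("a@example.com",), ("b@example.com",)])

# Long-lived test environment: same schema, but with duplicates accumulated over time.
existing = sqlite3.connect(":memory:")
existing.execute(SCHEMA)
existing.executemany("INSERT INTO customers (email) VALUES (?)",
                     [("a@example.com",), ("a@example.com",)])

fresh_result = apply_migration(fresh)        # succeeds
existing_result = apply_migration(existing)  # fails with an IntegrityError message
```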

Retaining a traditional test environment proves valuable for additional (integration) testing and for checks that cannot be done in on-demand environments. It also provides an opportunity to run regression tests on an updated environment, further reducing the likelihood of production incidents. Nonetheless, whether you need a permanent test environment depends on your specific context.

Latest stable version

The combined result of the first four phases ensures that a releasable version of the code is consistently available. This leads to high confidence and low uncertainty. Gone are the days of worrying about whether your team can release or not: all completed work can be released at any moment, be it continuously, on demand, or iteratively.

Many teams deploy this version to what they call the User Acceptance Testing (UAT) environment. Traditionally used for acceptance testing by end users, this environment also serves well for integration and end-to-end testing with other systems. It also offers an opportunity to set up monitoring, so that potential issues or risks are caught and addressed before they escalate to production. Ultimately, this is the latest stable version of the code, ready for production release after approval.

Latest production version

In this phase, the production environment receives the latest stable and approved version of the code. In our case, we can release all feature pipelines at once or each one separately, depending on our needs. This modular approach enables the release of individual parts of the system rather than the entire system.

Modern cloud technologies, such as serverless architecture, allow for seamless production releases without downtime. However, the feasibility of this approach depends on your specific context, as some applications need specific release moments to avoid disrupting production processes. As a final step, a production intake is advised.

Implementing monitoring in production is crucial for the team to be proactive. Our monitoring strategy serves two objectives: business and IT. It covers logging of technical errors, but also data quality checks on high-risk data to ensure that end-to-end flows within the chain run correctly. Any data quality issue is immediately relayed to the relevant stakeholder(s).
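Routing data quality issues to the right stakeholder could look roughly like this. It is a minimal sketch under assumed names: the checks, the record fields, and the stakeholder labels (`sales-team`, `finance-team`) are hypothetical, and actually delivering the messages (e-mail, chat, ticketing) is left out.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class DataQualityCheck:
    name: str
    owner: str                        # stakeholder to notify when the check fails
    is_valid: Callable[[dict], bool]


# Hypothetical checks on order records flowing through the chain.
CHECKS = [
    DataQualityCheck("order has customer", "sales-team",
                     lambda order: order.get("customer_id") is not None),
    DataQualityCheck("amount is positive", "finance-team",
                     lambda order: order.get("amount", 0) > 0),
]


def run_checks(orders: List[dict]) -> Dict[str, List[str]]:
    """Group failed checks per stakeholder so each one only sees relevant issues."""
    notifications: Dict[str, List[str]] = {}
    for order in orders:
        for check in CHECKS:
            if not check.is_valid(order):
                notifications.setdefault(check.owner, []).append(
                    f"order {order['id']} failed check '{check.name}'"
                )
    return notifications


orders = [
    {"id": 1, "customer_id": 7, "amount": 120.0},
    {"id": 2, "customer_id": None, "amount": 80.0},
    {"id": 3, "customer_id": 9, "amount": -5.0},
]
alerts = run_checks(orders)
# alerts == {"sales-team": ["order 2 failed check 'order has customer'"],
#            "finance-team": ["order 3 failed check 'amount is positive'"]}
```

Attaching an owner to each check, rather than to the monitoring system as a whole, is what lets an issue go straight to the person who can act on it.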

This final step concludes our six-phase QE value flow. For an overview of all phases, refer to the following visual.

Supportive phase: Hybrid model for automated regression tests

After reading about these six phases, you might wonder how automated regression testing fits in. As every experienced consultant would say: it depends. Anything is possible: continuous, scheduled, or on-demand. In our case, we adopted a hybrid model that supports our way of working (WoW), which is tailor-made for our specific context. To begin with, we run a weekly regression test targeting the high- and medium-risk components of the system. When the need arises for specific regression tests, we trigger the regression test pipeline to execute the desired set on a new on-demand environment. For extra flexibility, we can also run a specific automated regression test set on an existing on-demand environment. You can call this on-demand regression testing, or a form of testing-as-a-service.
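The selection logic of such a hybrid model can be sketched as a small planning function. This is an illustration, not our actual pipeline configuration: the risk labels, test names, and trigger strings are hypothetical, and the sketch assumes the weekly run uses a fresh on-demand environment.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Iterable, Optional, Set, Tuple


class Risk(Enum):
    HIGH = "high"
    MEDIUM = "medium"
    LOW = "low"


@dataclass(frozen=True)
class RegressionTest:
    name: str
    risk: Risk  # risk of the component the test covers


@dataclass(frozen=True)
class RegressionRun:
    tests: Tuple[str, ...]
    environment: str


NEW_ENVIRONMENT = "new on-demand environment"


def plan_regression_run(
    suite: Iterable[RegressionTest],
    trigger: str,
    requested: Optional[Set[str]] = None,
    existing_env: Optional[str] = None,
) -> RegressionRun:
    """Decide which regression tests run, and where, for a given trigger."""
    suite = list(suite)
    if trigger == "weekly":
        # Scheduled run: only high- and medium-risk components.
        # Assumption: the weekly run gets a fresh on-demand environment.
        names = tuple(t.name for t in suite if t.risk in (Risk.HIGH, Risk.MEDIUM))
        return RegressionRun(names, NEW_ENVIRONMENT)
    if trigger == "on-demand":
        if not requested:
            raise ValueError("an on-demand run needs at least one requested test")
        unknown = requested - {t.name for t in suite}
        if unknown:
            raise ValueError(f"unknown tests: {sorted(unknown)}")
        names = tuple(t.name for t in suite if t.name in requested)  # keep suite order
        # Reuse an existing on-demand environment if given, else spin up a new one.
        return RegressionRun(names, existing_env or NEW_ENVIRONMENT)
    raise ValueError(f"unknown trigger: {trigger!r}")


suite = [
    RegressionTest("checkout", Risk.HIGH),
    RegressionTest("search", Risk.MEDIUM),
    RegressionTest("footer-links", Risk.LOW),
]
weekly = plan_regression_run(suite, "weekly")
targeted = plan_regression_run(suite, "on-demand", requested={"footer-links"},
                               existing_env="pr-42")
```

Keeping the risk label on each test, rather than maintaining separate suites, lets one test set serve both the scheduled and the on-demand mode.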

Test automation tool stack

Which tools you use does not really matter; use tools and frameworks that work in your specific context. The ideal tool depends on multiple factors, including the development team’s experience, preferred programming language(s), the architecture of your entire system, customer requirements, and budget. Successful teams define their test automation strategy before selecting tools.

Conclusion

Quality Engineering (QE) plays a crucial role in software development and testing, driving the delivery of systems with the right quality while meeting business objectives. In this blog, we explored an implementation of QE that has proven successful in achieving seamless IT delivery. However, it is important to note that this implementation is context-driven and serves as an inspiration for developing your own tailored QE solution.

The QE value flow represents a connected series of phases through which value flows from concept to production. It involves both automated and manual quality measures to deliver results of the right quality at speed and with confidence. The key to success lies in tailoring these six phases to your needs.

Cloud native architecture offers exciting possibilities for agile development and testing. On-demand environments have emerged as a powerful tool, enabling swift creation of production-like environments, and facilitating various testing activities. These environments provide controlled and predictable test data, operate independently, and allow for thorough testing before integrating new features into subsequent development phases. Each phase contributes to the development and delivery of consistently high-quality code, ensuring confidence and low uncertainty in the release process.

In conclusion, a successful quality engineering value flow requires tailoring the approach to the unique needs of your context. By leveraging on-demand environments, adopting a hybrid model for automated regression checks, and implementing the appropriate quality measures, teams can achieve a seamless value flow to production, delivering systems of the right quality that meet customer expectations and drive business success.

All in all, I trust this blog gives a good overview of the possibilities that Quality Engineering can bring. Take a step back and reflect on your own context. What is working? What is not? Start some experiments. Take small steps. Fix bottlenecks. So, are you happy with your Quality at Speed? Talk about it with your stakeholders. And visualize your own QE value flow!

Published: 28 August 2023

Author: Boyd Kronenberg